I suppose this is the biggie. Since 1996 my database of choice has been the venerable NSF http://en.wikipedia.org/wiki/Notes_Storage_Facility#Database which is a Document Oriented Database. The rest of the world has now caught up and there are more modern options available to achieve the same things. The real flavour of the moment is MongoDB http://www.mongodb.org. Like the NSF, it’s document based and can be replicated between multiple locations. Unlike the NSF, it is much more scalable, and the old limitations that constrained us have largely been mitigated. Of course there is no such thing as a silver bullet, but really there is no comparison between the NSF and modern document oriented databases. You have no idea how sad that makes me, but it is true.

Anyway, back to the real world. Every application needs a database, and my choice in this case is MongoDB. As ever, this is not a tutorial; the MongoDB website provides quite reasonable documentation to get you going: http://docs.mongodb.org/manual/tutorial/install-mongodb-on-os-x/

Now, you could just go with Mongo directly, but in various tutorials I had read about MongooseJS as well. MongoDB is just a database; it doesn’t force you to implement schemas, validation, or really any structure on your data. It may well be the case that you don’t want any of that, in which case go for your life. Talking to MongoDB directly is very simple: you go through a driver, and the queries themselves are plain JSON-like documents. But with the addition of MongooseJS we get another layer of abstraction and assistance.

The primary boon for me has been the creation of simple schemas. Each of the document types in my application has a schema defined: I know what fields will be created, I can create default values for optional fields, and I can also create server side validation rules in a nice structured way. It’s also really useful to have a standard way of running code every time a document is saved back to the database.

And at this point we have our first venture into the cloud. It’s very easy to get everything we’re talking about running on your local machine, but what about when you want to show it off to the rest of the world? In my case I’m working with Bruce, so for him to test we need a hosted environment. There are hundreds of options available to you, but as with all of these posts I’ll describe what I’m doing.

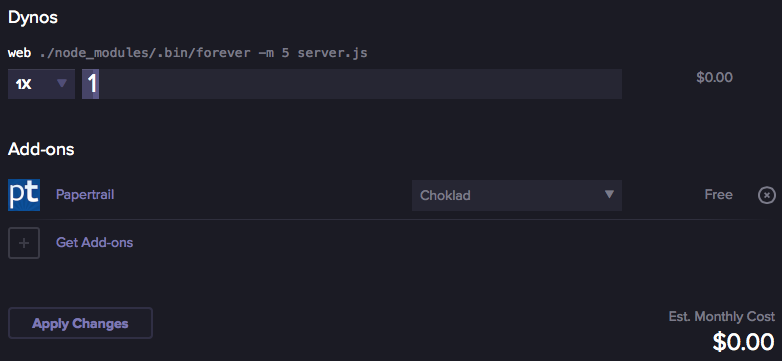

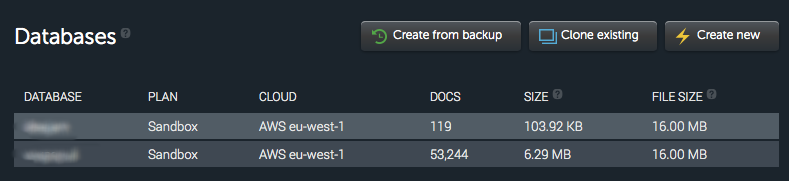

First we have the node.js hosting, where we’re using Heroku. It’s nice and simple to create a development instance that runs on a single dyno and then add add-ons for things like console management, monitoring and so on. But we also need somewhere to host the database itself. For this we’re currently using MongoLab, which again makes it very easy to set up a development database instance. The key thing about both of these development environments is that they are free. So I can do a Git push from my repo, add configuration to the application to point to MongoLab for data storage, and suddenly my application is running in the cloud.
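The "add configuration" part can be as small as a single function. This is only a sketch under a couple of assumptions: that the MongoLab connection string is exposed to the app as an environment variable called `MONGOLAB_URI` (check your own Heroku config for the actual name), and that a local MongoDB with a database I've called `myapp` is the fallback for development. The helper name `mongoUri` is mine.

```javascript
// Hypothetical helper: pick the MongoDB connection string for the current environment.
// MONGOLAB_URI is assumed to be set in the hosted environment; locally it is absent,
// so we fall back to a database on the developer's own machine.
function mongoUri(env) {
  return env.MONGOLAB_URI || 'mongodb://localhost:27017/myapp';
}

console.log(mongoUri(process.env));

// In the application this value would be handed to the connection call, e.g.
// mongoose.connect(mongoUri(process.env));
```

Keeping the connection string out of the code means the same repo runs unchanged on my machine and on Heroku, and the database credentials never end up in Git.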

This is all worryingly simple, isn’t it?